If you are considering implementing DFS replication, consider using Windows 2012 R2, because DFS replication has been massively improved there. It supports larger data sets, and performance is dramatically better than on Windows 2008 R2.

I've implemented DFS replication to keep two file servers synchronised. Click here or there if you want to learn more about DFS itself.

With DFS, I wanted to create a highly available file server service, based on two file servers, each with its own physical storage. DFS replication makes sure that both file servers are kept in sync.

If you set up DFS, you need to copy all the data from the original server to the secondary server. This is called seeding, and I've used robocopy for it, as recommended by Microsoft in the linked article.
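To make the seeding step a bit more concrete, here is a minimal sketch of how a robocopy seeding command could be assembled. The paths, the server name `FS02`, the log location and the retry values are all hypothetical, and the set of switches is my assumption of a sensible starting point, not a copy of the command from the Microsoft article. Check it against that article before using it.

```python
from typing import List


def build_preseed_command(source: str, destination: str, log_file: str) -> List[str]:
    """Return a robocopy argument list for seeding a replicated folder.

    The switch selection is an assumption for illustration only.
    """
    return [
        "robocopy",
        source,
        destination,
        "/E",                  # copy subdirectories, including empty ones
        "/B",                  # backup mode, for files the account cannot open normally
        "/COPYALL",            # copy data, attributes, timestamps, ACLs, owner and auditing info
        "/R:6",                # retry a failed copy 6 times (hypothetical value)
        "/W:5",                # wait 5 seconds between retries (hypothetical value)
        "/XD", "DfsrPrivate",  # skip the DfsrPrivate working folder
        "/TEE",                # write output to the console as well as the log
        f"/LOG+:{log_file}",   # append output to the log file
    ]


if __name__ == "__main__":
    # Hypothetical source folder, destination share and log file.
    cmd = build_preseed_command(
        r"D:\Shares\Data", r"\\FS02\D$\Shares\Data", r"C:\Temp\preseed.log"
    )
    print(" ".join(cmd))
```

On the source server, the list could be passed to `subprocess.run(cmd)`. Robocopy returns non-zero exit codes even for successful copies, so don't treat every non-zero code as a failure.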

Seeding is not mandatory. You can start with an empty folder on the secondary server and have DFS replicate all files. However, in my experience DFS replication can be extremely slow on Windows 2008 R2.

Once all files are seeded and DFS is configured, the initial replication can still take days. Replication times are based on:

- the number of files

- the size of the data

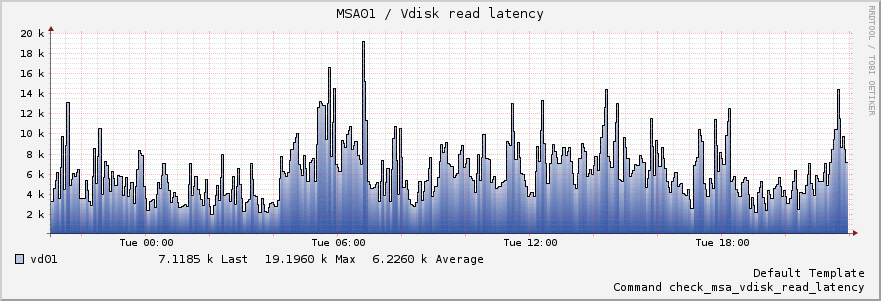

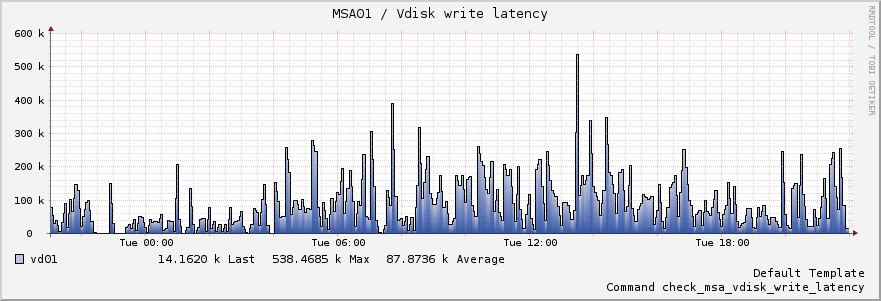

- the performance of the disk subsystems of both source and destination
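The first two factors above are easy to measure before you start. The following is a minimal sketch that walks a folder tree and reports the file count and total size. The function name `measure_tree` is mine, and this is plain Python rather than anything DFS provides.

```python
import os
from typing import Tuple


def measure_tree(root: str) -> Tuple[int, int]:
    """Return (number of files, total size in bytes) below root."""
    file_count = 0
    total_bytes = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                total_bytes += os.path.getsize(path)
            except OSError:
                # Skip files that vanish or cannot be read while scanning.
                continue
            file_count += 1
    return file_count, total_bytes


if __name__ == "__main__":
    count, size = measure_tree(".")
    print(f"{count} files, {size / 1024 ** 3:.2f} GiB")
```

Running this against the share on both servers after seeding also gives a quick sanity check that the two sides contain the same number of files.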

Note: Windows 2012 R2 improves DFS replication dramatically, which is all the more reason to upgrade your file servers to 2012 R2 or higher.

If you seed the files, DFS will not transfer files that are identical on both servers, which saves bandwidth and time. DFS decides whether files differ by comparing their hashes, and it still has to check every file. So even if you seed all data, the initial replication can take a while.

On our virtualised platform, the initial replication of 2.5 GB of data consisting of about five million files took about a full week. To me, that is not a very desirable outcome, but once the initial replication is done, there is no performance issue and all changes are nearly instantly replicated to the secondary server.
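To put that week into perspective, here is the arithmetic as a small sketch. It only uses the rounded figures from the paragraph above (about five million files, about one week), so the result is a rough average and not a measurement.

```python
SECONDS_PER_WEEK = 7 * 24 * 60 * 60  # 604,800 seconds


def files_per_second(file_count: int, elapsed_seconds: int) -> float:
    """Average number of files processed per second."""
    return file_count / elapsed_seconds


if __name__ == "__main__":
    rate = files_per_second(5_000_000, SECONDS_PER_WEEK)
    print(f"{rate:.1f} files per second")
```

That works out to roughly eight files per second on average. With this many files, the file count seems to matter far more for the initial replication than the amount of data.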

For the particular configuration I've set up, the performance of the storage subsystem could have contributed to the slow initial replication.

To speed up the replication process, it's important that you install the latest version of robocopy for Windows 2008 R2 on both systems. There is a bug in older versions of robocopy that causes permissions on files not to be set properly. This results in file hash mismatches, causing DFS to replicate all files and nullifying the benefit of seeding.

Hotfixes for Windows 2008 R2:

Hotfixes for Windows 2012 R2:

To verify if a file on both servers has identical hashes, follow these instructions
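As a rough first pass before using the DFS tooling, you can also compare the contents of a file on both servers. The sketch below does that with a plain SHA-256 hash. Note the assumption: this is not the hash DFS uses. As described above, wrong permissions alone are enough to cause a DFS hash mismatch, and a content hash cannot see that. Matching output here only tells you the file data is the same.

```python
import hashlib


def content_sha256(path: str, chunk_size: int = 1024 * 1024) -> str:
    """Return the SHA-256 hex digest of a file's contents (data only, no ACLs)."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read in chunks so large files do not have to fit in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def same_content(path_a: str, path_b: str) -> bool:
    """True if both files have byte-identical contents."""
    return content_sha256(path_a) == content_sha256(path_b)
```

A hypothetical call would be `same_content(r"D:\Shares\Data\file.txt", r"\\FS02\D$\Shares\Data\file.txt")`, where both paths are made up for illustration.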

If you've checked a few files and confirmed that the hashes are identical, it's OK to configure DFS replication. If you see a lot of Event ID 4412 messages in the DFS Replication event log, there is probably an issue with the file hashes.