Linux Bonding

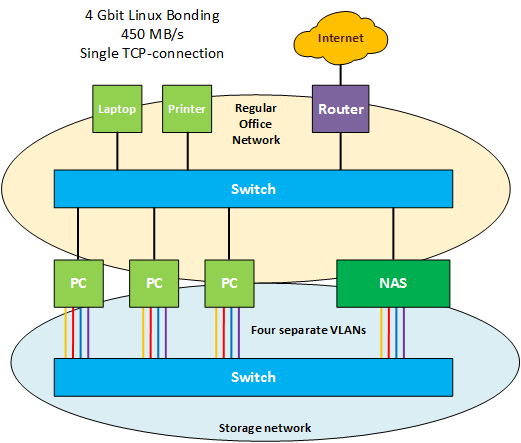

In this article I'd like to show the results of using regular 1 Gigabit network connections to achieve 450 MB/s file transfers over NFS.

I'm again using Linux interface bonding for this purpose.

Linux interface bonding can be used to create a virtual network interface with the aggregate bandwidth of all the interfaces added to the bond. Two gigabit network interfaces will give you - guess what - two gigabit or ~220 MB/s of bandwidth.

Even a single TCP connection can use this aggregate bandwidth.
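As a sanity check on these numbers, here is a rough best-case calculation. The framing and header sizes are assumptions: a standard 1500-byte MTU, IPv4, TCP with the 12-byte timestamp option, and 38 bytes of Ethernet framing per frame. "MB" means 10^6 bytes here.

```python
# Rough best-case TCP payload rate for n bonded gigabit links.
# Assumed overheads (not measured): Ethernet preamble 8 + header 14 + FCS 4
# + inter-frame gap 12 bytes, plus IPv4 20 + TCP 20 + timestamp option 12.

LINK_BITS_PER_S = 1_000_000_000
ETH_FRAMING = 8 + 14 + 4 + 12
IP_TCP_HEADERS = 20 + 20 + 12

def payload_mb_per_s(links: int, mtu: int = 1500) -> float:
    """Best-case TCP payload in MB/s (10**6 bytes) across `links` gigabit links."""
    wire_bytes_per_s = links * LINK_BITS_PER_S / 8
    efficiency = (mtu - IP_TCP_HEADERS) / (mtu + ETH_FRAMING)
    return wire_bytes_per_s * efficiency / 1e6

for n in (1, 2, 4):
    print(f"{n} link(s): {n * 125} MB/s raw, ~{payload_mb_per_s(n):.0f} MB/s TCP payload")
```

This gives about 235 MB/s for two links and about 471 MB/s for four. The ~220 MB/s quoted above is therefore close to the theoretical ceiling.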

So how is this achieved? The Linux bonding kernel module has a special bonding mode: mode 0, or round-robin (balance-rr) bonding. In this mode, the kernel stripes packets across the interfaces in the bond, much like RAID 0 stripes data across hard drives. As with RAID 0, every device you add gives you additional performance.
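To make the RAID 0 analogy concrete, here is a toy model of round-robin striping. It is a simplification, not the kernel's actual code. Each outgoing packet simply goes to the next interface in turn, so consecutive packets of one TCP connection end up on different links. The interface names eth1 to eth4 are assumptions matching the example later in this article.

```python
from itertools import cycle

def round_robin(packets, slaves):
    """Assign packets to bond slaves in strict rotation (a model of mode 0)."""
    assignment = {s: [] for s in slaves}
    for pkt, slave in zip(packets, cycle(slaves)):
        assignment[slave].append(pkt)
    return assignment

# Ten consecutive packets (by sequence number) belonging to ONE TCP connection.
striped = round_robin(range(10), ["eth1", "eth2", "eth3", "eth4"])
for slave, pkts in striped.items():
    print(slave, pkts)
```

Because the packets travel over different links, they can arrive slightly out of order. The receiving TCP stack has to reorder them, which is one reason the real-world gain per extra link is less than 100%.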

So I've added HP NC364T quad-port network cards to my servers, giving each server a theoretical bandwidth of 4 gigabit. These HP cards cost just 145 euros, and I even found one for 105 dollars on Amazon.

With two servers, you just need four UTP cables to connect the two network cards directly, and you're done. This would cost you about 300 euros or about 200 dollars in total.

If you want to connect more than two servers, you need a managed gigabit switch with VLAN support and enough ports. Each server uses four ports on the switch, not counting ports for remote access and other purposes.

Managed gigabit switches are quite inexpensive these days. I bought a 24-port switch, the HP 1810-24G v2 (J9803A), for about 180 euros (209 dollars on Newegg), and it can even be rack-mounted.

Using VLANs

You can't just connect all these quad-port network cards to a single VLAN on a gigabit switch. I tested this scenario first and got a maximum transfer speed of only 270 MB/s while copying a file between servers over NFS.

The trick is to create a separate VLAN for every port position on the network card. With a quad-port card, you need four VLANs. The same port on every card must be in the same VLAN: for example, port 1 on every card goes in VLAN 21, port 2 in VLAN 22, and so on. You also must add each switch port to the VLAN of the card port it is connected to. Finally, you must add the network interfaces to the bond in the right order.
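The VLAN plan can be written down mechanically, which helps when configuring the switch. Below is a small sketch with several assumptions. The server names are hypothetical. Card port N is assumed to show up as ethN. VLAN numbers start at 21, as in the example above.

```python
from collections import defaultdict

def vlan_plan(servers, ports_per_card=4, base_vlan=20):
    """Group (server, interface) pairs by VLAN: card port N -> VLAN base_vlan + N.

    Assumes card port N is named ethN on every server (hypothetical naming).
    """
    plan = defaultdict(list)
    for server in servers:
        for port in range(1, ports_per_card + 1):
            plan[base_vlan + port].append(f"{server}:eth{port}")
    return dict(plan)

# Hypothetical server names; each VLAN gets exactly one port from every server.
plan = vlan_plan(["server-a", "server-b", "server-c"])
for vlan, members in sorted(plan.items()):
    print(f"VLAN {vlan}: {', '.join(members)}")
```

Each VLAN line lists the switch ports that must be untagged members of that VLAN. The interfaces are then enslaved in the same eth1 to eth4 order on every server.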

So why do you need VLANs? The reason is quite simple. In round-robin mode, bonding gives all interfaces in the bond the same hardware (MAC) address. The switch therefore sees the same MAC address on four ports and gets confused: to which port should it send frames for that address?

If you put each port in its own VLAN, the shared MAC address is seen only once per VLAN, so the switch won't get confused. By creating four VLANs, you are in effect creating four separate switches. So if you have, for example, four cheap 8-port unmanaged gigabit switches, this would work too.
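The confusion can be illustrated with a simplified MAC learning table. A learning switch remembers one port per (VLAN, MAC) pair. When the shared bond MAC shows up on ports 1, 2, 3, 4, 1, 2 and so on, a single VLAN forces the entry to move on every frame. With one VLAN per port, each entry is learned once and never moves. The MAC address below is hypothetical. Real switches react to such "MAC flapping" in different ways, so this only models the table itself.

```python
def count_flaps(frames, per_port_vlan):
    """Simulate a learning switch and count how often a MAC entry changes port.

    frames: iterable of (switch_port, src_mac).
    per_port_vlan: True -> port N is in VLAN 20 + N; False -> all ports in VLAN 1.
    """
    table = {}   # (vlan, mac) -> switch port last seen
    flaps = 0
    for port, mac in frames:
        vlan = 20 + port if per_port_vlan else 1
        key = (vlan, mac)
        if key in table and table[key] != port:
            flaps += 1
        table[key] = port
    return flaps

BOND_MAC = "02:00:00:00:00:01"  # hypothetical MAC shared by all four slaves
# Round-robin: 12 frames leave the bond on switch ports 1, 2, 3, 4, 1, 2, ...
frames = [(i % 4 + 1, BOND_MAC) for i in range(12)]
print("single VLAN:  ", count_flaps(frames, per_port_vlan=False))
print("VLAN per port:", count_flaps(frames, per_port_vlan=True))
```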

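So, assuming that you have four Ethernet interfaces, this is an example of how you can create the bond. The bonding module must be loaded first, for example with 'modprobe bonding'; its default mode is round-robin.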

ifenslave bond0 eth1 eth2 eth3 eth4

Next, assign an IP address to the bond0 interface, just as you would with a regular eth(x) interface:

ifconfig bond0 192.168.2.10 netmask 255.255.255.0

Up to this point, I only achieved about 350 MB/s. To reach 450 MB/s, I needed to enable jumbo frames on all interfaces and on the switch:

ifconfig bond0 mtu 9000 up
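One plausible reason jumbo frames help is the per-packet processing cost on both ends. This is an assumption, not something measured here. The sketch counts how many frames per second are needed to carry 450 MB/s of TCP payload. It assumes 52 bytes of IPv4 and TCP headers per packet, including the timestamp option.

```python
def frames_per_second(payload_mb_per_s: float, mtu: int) -> float:
    """Frames/s needed to carry a TCP payload rate (MB/s = 10**6 bytes/s).

    Assumes IPv4 (20) + TCP (20) + TCP timestamp option (12) = 52 header bytes.
    """
    payload_per_frame = mtu - 52
    return payload_mb_per_s * 1e6 / payload_per_frame

for mtu in (1500, 9000):
    print(f"MTU {mtu}: ~{frames_per_second(450, mtu):,.0f} frames/s for 450 MB/s")
```

At MTU 1500 this is roughly 311,000 frames per second, compared with about 50,000 at MTU 9000. That is about six times fewer packets to process. Note that the switch must accept 9000-byte frames as well, or large frames will be dropped.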

Next, you can just mount any NFS share over the interface and start copying files. That's all.
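On current distributions, ifenslave and ifconfig are often replaced by iproute2. The sketch below builds the equivalent 'ip' commands for the same bond and prints them by default, so nothing is changed unless you pass dry_run=False and run it as root. The function names are hypothetical helpers. The interface names and address are the ones used above.

```python
import shlex
import subprocess

def bond_commands(bond, slaves, address, mtu=9000):
    """Build iproute2 commands for a round-robin (mode 0, balance-rr) bond."""
    cmds = [f"ip link add {bond} type bond mode balance-rr"]
    for slave in slaves:  # keep the same eth1..eth4 order on every server
        cmds.append(f"ip link set {slave} down")          # take the slave down before enslaving
        cmds.append(f"ip link set {slave} master {bond}")
    cmds.append(f"ip addr add {address} dev {bond}")
    cmds.append(f"ip link set {bond} mtu {mtu} up")        # the bond applies its MTU to the slaves
    return cmds

def run_commands(cmds, dry_run=True):
    """Print each command; execute it only when dry_run is False (needs root)."""
    for cmd in cmds:
        print(cmd)
        if not dry_run:
            subprocess.run(shlex.split(cmd), check=True)

cmds = bond_commands("bond0", ["eth1", "eth2", "eth3", "eth4"], "192.168.2.10/24")
run_commands(cmds)
```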

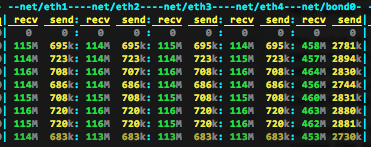

Once each interface was in the appropriate VLAN, I got about 450 MB/s for a single file transfer over NFS.

Strictly speaking, I didn't use 'cp' but 'dd', because I don't (yet) have a disk array that can write at 450 MB/s.
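The article doesn't list the exact 'dd' options used. As a rough stand-in, here is a Python sketch of a dd-style sequential write test: write fixed-size blocks of zeros, fsync, and divide bytes by elapsed time. The block size and count defaults are assumptions.

```python
import os
import time

def write_throughput(path, block_size=1024 * 1024, count=1024):
    """Write `count` blocks of zeros to `path`, similar to dd bs=... count=....

    Returns MB/s (10**6 bytes per second). The final fsync() forces the data
    out of the client's page cache before the timer stops.
    """
    block = bytes(block_size)  # a buffer filled with zero bytes
    start = time.perf_counter()
    with open(path, "wb") as f:
        for _ in range(count):
            f.write(block)
        f.flush()
        os.fsync(f.fileno())
    elapsed = time.perf_counter() - start
    return block_size * count / elapsed / 1e6
```

For example, write_throughput("/mnt/nfs/testfile", count=4096) would write 4 GiB to a hypothetical NFS mount point. On NFS, fsync() asks the server to commit the data, so a slow disk on the server can still cap the result.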

So for three servers (three network cards plus the switch), this solution costs me about 600 euros or 520 dollars.

What about LAGs and LACP?

Don't configure your clients or switches for LACP: it doesn't give you the speed benefit, and it's not required. LACP (802.3ad) distributes traffic per flow, so a single TCP connection still uses only one link.
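To show why per-flow balancing doesn't help a single transfer, here is a model of a hash-based transmit policy. CRC32 is a stand-in chosen for illustration; the kernel's xmit_hash_policy uses its own formula. The peer address 192.168.2.11 and the source port are hypothetical, and 2049 is the standard NFS port. Because every packet of one connection carries the same addresses and ports, the hash always picks the same link.

```python
import zlib

SLAVES = ["eth1", "eth2", "eth3", "eth4"]

def hash_slave(src, dst, sport, dport, slaves=SLAVES):
    """Pick a slave from a flow's 4-tuple (simplified stand-in for a layer3+4 hash)."""
    key = f"{src}-{dst}-{sport}-{dport}".encode()
    return slaves[zlib.crc32(key) % len(slaves)]

# Every packet of one NFS-over-TCP connection has the same 4-tuple...
flow = ("192.168.2.10", "192.168.2.11", 50000, 2049)
links_used = {hash_slave(*flow) for _ in range(1000)}
print(len(links_used))  # ...so all 1000 packets leave on a single link: prints 1
```

Round-robin ignores the flow entirely and just rotates through the interfaces. That is why it is the only mode that lets one TCP connection exceed the speed of a single link.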

10 Gigabit Ethernet?

Frankly, it's quite expensive if you need to connect more than two servers. An entry-level 10GbE NIC like the Intel X540-T1 costs about 300 euros or 350 dollars. This card works over regular copper UTP cabling (Cat 6 or Cat 6a). (Prices are from Dutch web shops in euros and from Newegg in dollars.)

With two of those, you can have 10 Gbit Ethernet between two servers for 600 euros or 700 dollars. To connect more servers, you need a switch, and 10 Gbit switches are not cheap: an 8-port unmanaged switch from Netgear (ProSAFE Plus XS708E) costs about 720 euros or 900 dollars.

To connect three servers, you need three network cards and a switch: 900 euros (1050 dollars) for the cards and 720 euros (900 dollars) for the switch, totalling 1620 euros or 1950 dollars.
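For a side-by-side view, this sketch totals both options for three servers using the prices quoted in this article. Cables are not included. The per-card dollar price of 350 for the X540-T1 is derived from the two-card (700 dollars) and three-card (1050 dollars) figures.

```python
# (euros, US dollars) as quoted in the article.
QUAD_PORT_NIC = (145, 105)    # HP NC364T
GIGABIT_SWITCH = (180, 209)   # HP 1810-24G v2 (J9803A)
TEN_GBE_NIC = (300, 350)      # Intel X540-T1
TEN_GBE_SWITCH = (720, 900)   # Netgear ProSAFE Plus XS708E

def total(nic, switch, servers):
    """One NIC per server plus one switch, per currency."""
    return tuple(servers * n + s for n, s in zip(nic, switch))

for name, nic, switch in [("4x1GbE bond", QUAD_PORT_NIC, GIGABIT_SWITCH),
                          ("10GbE", TEN_GBE_NIC, TEN_GBE_SWITCH)]:
    eur, usd = total(nic, switch, servers=3)
    print(f"{name}: {eur} EUR / {usd} USD")
```

The bonded setup comes to 615 euros or 524 dollars, and the 10GbE setup to 1620 euros or 1950 dollars.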

You will get higher transfer speeds, but at a significantly higher price.

For many business purposes, this higher price is easily justified, and I would choose 10 Gb over 1 Gb bonding in a heartbeat: fewer cables, higher performance, lower latency.

However, bonding gigabit interfaces lets you use off-the-shelf equipment and may be a nice compromise between cost, usability and performance.

Operating System Support

As far as I'm aware, round-robin bonding is only supported on Linux.
